The Runtime Schema Cast operator casts its input schema to its configured target output schema at run time. This operator is used in nested modules to expand the subfields within a capture field, either by flattening or nesting the captured subfields. Flattened subfields appear as top-level fields of the target schema, while nested fields appear as subfields of a top-level tuple field whose name matches that of the captured field.

This operator is likely to be used in modules that use capture fields in their input schema or table definitions. Such modules serve as generic interface modules that are transformed at run time into specific uses.

For example, the OrderMatcher.sbapp module in the Capture Fields and Parent Schemas sample can be used to process orders using any financial instrument type. The TopLevelFX.sbapp module in that sample calls the Order Matcher module in a way that processes orders for FX trades. In this example as delivered, there is no need for a Runtime Schema Cast operator.

Now consider a different Order Matcher module, perhaps named OrderMatcherWithSpecialHandling.sbapp, that handles orders generically for all instrument types but also has special handling for FX orders. Inside such a module, processing might be split into two branches, one for FX orders, the other for all other order types. The FX processing branch could start with a Runtime Schema Cast operator that extracts the capture field data from the module's input stream, using either the FLATTEN or the NEST transform strategy. See Client Access to Capture Fields.

This section describes the properties you can set for a runtime schema cast operator, using the various tabs of the Properties view in StreamBase Studio.

This section describes the properties on the General tab in the Properties view for the Runtime Schema Cast operator.

Name: Use this required field to specify or change the name of this instance of this component. The name must be unique within the current EventFlow module. The name can contain alphanumeric characters, underscores, and escaped special characters. Special characters can be escaped as described in Identifier Naming Rules. The first character must be alphabetic or an underscore.

Operator: A read-only field that shows the formal name of the operator.

Class name: Shows the fully qualified class name that implements the functionality of this operator. If you need to reference this class name elsewhere in your application, you can right-click this field and select Copy from the context menu to place the full class name in the system clipboard.

Start options: This field provides a link to the Cluster Aware tab, where you configure the conditions under which this operator starts.

Enable Error Output Port: Select this checkbox to add an Error Port to this component. In the EventFlow canvas, the Error Port shows as a red output port, always the last port for the component. See Using Error Ports to learn about Error Ports.

Description: Optionally, enter text to briefly describe the purpose and function of the component. In the EventFlow Editor canvas, you can see the description by pressing Ctrl while the component's tooltip is displayed.

This section describes the properties on the Operator Properties tab in the Properties view for the Runtime Schema Cast operator.

| Property | Description |
|---|---|
| Capture Transform Strategy | Set to FLATTEN to have captured fields appear as top-level fields of the output schema and NEST to have captured fields appear as subfields of a top-level tuple field. |
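The difference between the two strategies can be illustrated with a small plain-Python model. This is only a sketch and does not use any StreamBase API: the `Capture` class, the `cast_schema` function, and the FX field names are hypothetical, and placing flattened subfields at the position of the capture field is an assumption made for the illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capture:
    """Hypothetical stand-in for a capture field and the subfields it captured."""
    subfields: dict


def cast_schema(input_schema: dict, strategy: str) -> dict:
    """Return a model of the output schema after expanding capture fields."""
    if strategy not in ("FLATTEN", "NEST"):
        raise ValueError(f"unknown strategy: {strategy}")
    output = {}
    for name, field_type in input_schema.items():
        if not isinstance(field_type, Capture):
            # Ordinary fields pass through unchanged.
            output[name] = field_type
        elif strategy == "FLATTEN":
            # Captured subfields become top-level fields (ordering is assumed).
            for sub_name, sub_type in field_type.subfields.items():
                if sub_name in output:
                    raise ValueError(f"duplicate field name: {sub_name}")
                output[sub_name] = sub_type
        else:
            # NEST: subfields sit under a tuple field named after the capture field.
            output[name] = dict(field_type.subfields)
    return output


# Hypothetical input schema for an FX order with one capture field.
input_schema = {
    "orderId": "long",
    "instrument": Capture({"currencyPair": "string", "rate": "double"}),
}

flattened = cast_schema(input_schema, "FLATTEN")
nested = cast_schema(input_schema, "NEST")
```

In this model, `flattened` holds `orderId`, `currencyPair`, and `rate` as top-level fields, while `nested` holds `orderId` plus an `instrument` tuple field containing `currencyPair` and `rate`.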

For the Runtime Schema Cast operator, use the Edit Schemas tab to specify the schema of the operator's output port. The schema must match the input schema after being transformed as specified by the Capture Transform Strategy property. Since the transformation occurs at runtime, errors in specifying the output schema are detected at runtime and cause the operator to fail to start.

Use the Edit Schemas tab much like other Edit Schemas tabs throughout StreamBase Studio:

- Use the control at the top of the Edit Schemas tab to specify the schema type:

  - Named schema

    Use the dropdown list to select the name of a named schema previously defined in or imported into this module. The dropdown list is empty unless you have defined or imported at least one named schema for the current module.

    When you select a named schema, its fields are loaded into the schema grid, overriding any schema fields already present. Once you import a named schema, the schema grid is dimmed and can no longer be edited. To restore the ability to edit the schema grid, re-select Private Schema from the dropdown list.

  - Private schema

    Populate the schema fields using one of these methods:

    - Define the schema's fields manually, using the add button to add a row for each schema field. You must enter values for the Field Name and Type cells. The Description cell is optional. For example:

      | Field Name | Type | Description |
      |---|---|---|
      | symbol | string | Stock symbol |
      | quantity | int | Number of shares |

      Field names must follow the StreamBase identifier naming rules. The data type must be one of the supported StreamBase data types, including, for tuple fields, the identifier of a named schema and, for override fields, the data type name of a defined capture field. See Using the Function Data Type for more on defining function fields.

    - Add and extend a parent schema. Use the Add Parent Schema option to select a parent schema, then optionally add local fields that extend the parent schema. If the parent schema includes a capture field used as an abstract placeholder, you can override that field with an identically named concrete field. Schemas must be defined in dependency order. If a schema is used before it is defined, an error results.

    - Copy an existing schema whose fields are appropriate for this component. To reuse an existing schema, open the Copy Schema dialog. (You may be prompted to save the current module before continuing.)

      In the Copy Schema dialog, select the schema of interest as described in Copying Schemas. Confirm your selection, and the selected schema fields are loaded into the schema grid. Remember that this is a local copy and any changes you make here do not affect the original schema that you copied.

    Use the schema grid's buttons to edit and order your schema fields.

- Optionally, document your schema in the Schema Description field.
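The dependency-order rule for parent schemas can be pictured with a short plain-Python check. This is a sketch under stated assumptions, not how StreamBase Studio validates schemas: the `check_definition_order` function and the schema names `OrderBase` and `FXOrder` are hypothetical.

```python
def check_definition_order(definitions):
    """Raise ValueError if a schema names a parent that is not yet defined.

    `definitions` is a list of (schema_name, parent_name_or_None) pairs,
    given in the order in which the schemas are defined.
    """
    defined = set()
    for name, parent in definitions:
        if parent is not None and parent not in defined:
            raise ValueError(
                f"schema {name!r} uses {parent!r} before it is defined"
            )
        defined.add(name)


# The parent is defined first, so this order is accepted.
check_definition_order([("OrderBase", None), ("FXOrder", "OrderBase")])

# The child appears before its parent, so this order is rejected.
try:
    check_definition_order([("FXOrder", "OrderBase"), ("OrderBase", None)])
    order_error = None
except ValueError as exc:
    order_error = str(exc)
```

Here `order_error` ends up holding the message for the rejected ordering, which mirrors the rule that a schema used before it is defined results in an error.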

Use the settings in this tab to enable this operator or adapter for runtime start and stop conditions in a multi-node cluster. During initial development of the fragment that contains this operator or adapter, and for maximum compatibility with releases before 10.5.0, leave the Cluster start policy control in its default setting, Start with module.

Cluster awareness is an advanced topic that requires an understanding of StreamBase Runtime architecture features, including clusters, quorums, availability zones, and partitions. See Cluster Awareness Tab Settings on the Using Cluster Awareness page for instructions on configuring this tab.

Use the Concurrency tab to specify parallel regions for this instance of this component, or multiplicity options, or both. The Concurrency tab settings are described in Concurrency Options, and dispatch styles are described in Dispatch Styles.

Caution

Concurrency settings are not suitable for every application, and using these settings requires a thorough analysis of your application. For details, see Execution Order and Concurrency, which includes important guidelines for using the concurrency options.

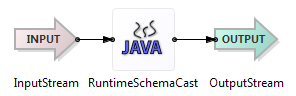

The operator has one input port and one output port to communicate with the surrounding application. The input port's schema is expected to have a capture field. The output port's schema is a transformation of the input schema, with the captured fields appearing as either top-level or nested fields, depending on the value of the Capture Transform Strategy property.

The Runtime Schema Cast operator fails to start if the transformed input schema does not match the schema specified in the Target Output Schema property.
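The start-time check described here can be modeled in a few lines of plain Python. This is a minimal sketch, not the operator's actual implementation: `OperatorStartError`, `find_mismatches`, and `start_operator` are hypothetical names, schemas are modeled as dictionaries of field name to type, and comparing fields by name and type only (ignoring order) is an assumption.

```python
class OperatorStartError(Exception):
    """Hypothetical error standing in for the operator failing to start."""


def find_mismatches(transformed: dict, target: dict) -> list:
    """List the differences between the transformed input schema and the target."""
    problems = []
    for name, field_type in target.items():
        if name not in transformed:
            problems.append(f"missing field: {name}")
        elif transformed[name] != field_type:
            problems.append(
                f"type mismatch for {name}: "
                f"expected {field_type}, got {transformed[name]}"
            )
    for name in transformed:
        if name not in target:
            problems.append(f"unexpected field: {name}")
    return problems


def start_operator(transformed: dict, target: dict) -> None:
    """Refuse to start when the two schemas differ."""
    problems = find_mismatches(transformed, target)
    if problems:
        raise OperatorStartError("; ".join(problems))


# Hypothetical flattened input schema and a target schema with a wrong type.
transformed = {"orderId": "long", "currencyPair": "string", "rate": "double"}
bad_target = {"orderId": "long", "currencyPair": "string", "rate": "int"}

start_operator(transformed, dict(transformed))  # schemas match, no error

try:
    start_operator(transformed, bad_target)
    start_error = None
except OperatorStartError as exc:
    start_error = str(exc)
```

Because the mismatch surfaces only when the operator starts, it is worth reviewing the target schema against the expected captured fields before deploying the module.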