
StreamBase® is an in-memory transactional application platform that provides scalable high-performance transaction processing with durable object management and replication. StreamBase® allows organizations to develop highly available, distributed, transactional applications using the standard Java POJO programming model.

StreamBase® provides these capabilities:

- Transactions: high-performance, distributed, all-or-none ACID work.
- In-Memory Durable Object Store: ultra-low-latency transactional persistence.
- Transactional High Availability: transparent memory-to-memory replication with instant fail-over and fail-back.
- Disaster Recovery: configurable cross-data-center redundancy.
- Distributed Computing: location-transparent objects and method invocation, allowing transparent horizontal scaling.
- Online Application and Product Upgrades: upgrades of both applications and product versions without a service outage.
- Application Architecture: a flexible application architecture that provides a single self-contained archive to simplify deployment.

StreamBase® features are available through Managed Objects, which provide:

- Transactions
- Distribution
- Durable Object Store
- Keys and Queries
- Asynchronous methods
- High Availability

Managed Objects are fully transactional. Transactions are in-memory and support transactional locking, deadlock detection, and isolation. Both serializable and read-committed snapshot transaction isolation are supported. Depending on the transaction isolation model, transaction locking supports a single writer and multiple readers, with read-lock promotion. Deadlock detection and retry are handled transparently. Transactional isolation ensures that object state modifications are not visible outside of a transaction until the transaction commits.
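The isolation rule described above (state changes stay invisible outside a transaction until it commits) can be illustrated with a small plain-Java sketch. It does not use the StreamBase® API: `SketchStore` and `SketchTransaction` are hypothetical names, and buffering writes until commit is a simplification that stands in for the locking the platform performs.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for a transactional store; not a StreamBase class.
class SketchStore {
    private final Map<String, Integer> committed = new HashMap<>();

    // Read the last committed value, as seen from outside any transaction.
    synchronized Integer read(String key) {
        return committed.get(key);
    }

    // Apply every buffered write in one step so readers see all or none.
    synchronized void applyAll(Map<String, Integer> writes) {
        committed.putAll(writes);
    }
}

// Hypothetical transaction that buffers its writes until commit.
class SketchTransaction {
    private final SketchStore store;
    private final Map<String, Integer> pending = new HashMap<>();

    SketchTransaction(SketchStore store) {
        this.store = store;
    }

    void write(String key, int value) {
        pending.put(key, value);
    }

    // Inside the transaction, a read sees the transaction's own writes first.
    Integer read(String key) {
        return pending.containsKey(key) ? pending.get(key) : store.read(key);
    }

    // Publish all buffered writes together.
    void commit() {
        store.applyAll(pending);
        pending.clear();
    }

    // Discard all buffered writes; the store is left unchanged.
    void rollback() {
        pending.clear();
    }
}
```

In this sketch, a value written through a `SketchTransaction` is returned by `store.read` only after `commit()` is called, and `rollback()` leaves the committed state untouched.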

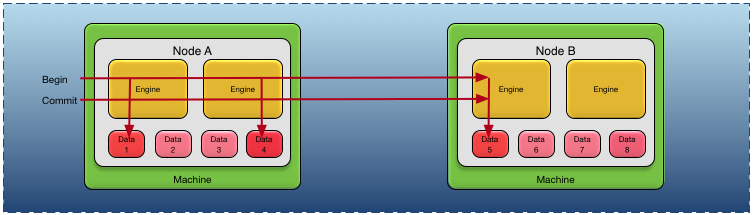

Transactions can optionally span multiple engines on the same or different machines. When a transaction spans machines, distributed locking and deadlock detection are provided. For example, in Figure 1, “Transaction scope”, a transaction is started on node A, modifies data from two different engines, communicates over a network to start a global transaction, and modifies data from an engine on node B. All of the data modifications are atomically committed when the transaction completes.

All transactional features are provided natively by the StreamBase® runtime and do not require any external transaction manager or database.

Managed Objects are always persistent in shared memory. This allows an object to live beyond the lifetime of the JVM. Shared memory Managed Objects also support extents and triggers. There is optional support for transparently integrating Managed Objects with a secondary store, such as an RDBMS, NoSQL database, data grid, or archival store.

Managed Objects can optionally have one or more keys defined. An index is maintained in shared memory for each key defined on a Managed Object. This allows high-performance queries to be performed against Managed Objects using a shared memory index. Queries can be scoped to the local node, a subset of the nodes in the cluster, or all nodes in the cluster.
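As a rough illustration of query scoping, the sketch below keeps one key index per node and lets the caller choose which nodes a lookup covers. It is plain Java, not the StreamBase® query API: `SketchKeyIndex`, the node names, and the use of strings for keys and objects are all assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical per-node key index; not a StreamBase class.
class SketchKeyIndex {
    // node name -> (key value -> object), one index per node
    private final Map<String, Map<String, String>> indexByNode = new HashMap<>();

    // Record an object under its key in the index of the given node.
    void put(String node, String key, String object) {
        indexByNode.computeIfAbsent(node, n -> new HashMap<>()).put(key, object);
    }

    // Look up a key on the given nodes only; the caller picks the scope.
    List<String> query(String key, Set<String> scope) {
        List<String> results = new ArrayList<>();
        for (String node : scope) {
            Map<String, String> index = indexByNode.get(node);
            if (index != null && index.containsKey(key)) {
                results.add(index.get(key));
            }
        }
        return results;
    }
}
```

Passing a single node name as the scope corresponds to a local query, while passing every node name corresponds to a cluster-wide query.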

Asynchronous methods allow applications to queue a method for execution in a separate transaction. Transactional guarantees ensure that the method is executed once and only once in a separate transaction.

StreamBase® provides these high availability services:

- Transactional replication across one or more nodes
- Complete application transparency
- Dynamic partition definition
- Dynamic cluster membership
- Dynamic object to partition mapping
- Geographic redundancy
- Multi-master detection with avoidance and reconciliation

A partitioned Managed Object has a single active node and zero or more replica nodes. All object state modifications are transactionally completed on the current active node and all replica nodes. Replica nodes take over processing for an object in priority order when the currently active node becomes unavailable. Support is provided for restoring an object's state from a replica node during application execution without any service interruption.

Applications can read and modify a partitioned object on any node. StreamBase® ensures that the updates occur on the current active node for the object, transparently to the application.

Partitioned Managed Objects are contained in a Partition. Multiple Partitions can exist on a single node. Each Partition is associated with a priority list of nodes; the highest-priority available node is the current active node for the Partition. Partitions can be migrated to different nodes during application execution without any service interruption. Partitions can be dynamically created by applications or by the operator.
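The priority-list rule can be sketched in a few lines of plain Java. `SketchPartition` and the node names are hypothetical and not part of the StreamBase® API; the sketch only shows how the active node follows from the priority list and the set of currently available nodes.

```java
import java.util.List;
import java.util.Optional;
import java.util.Set;

// Hypothetical helper; not a StreamBase class.
class SketchPartition {
    private final List<String> priorityList; // highest priority first

    SketchPartition(List<String> priorityList) {
        this.priorityList = priorityList;
    }

    // The active node is the first node in priority order that is available.
    // The result is empty when no node on the list is available.
    Optional<String> activeNode(Set<String> availableNodes) {
        return priorityList.stream()
                .filter(availableNodes::contains)
                .findFirst();
    }
}
```

With a priority list of A, B, C, the active node is A while A is available, becomes B when A is lost, and returns to A once A is available again.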

Nodes can dynamically join and leave clusters. Active nodes, partition states, and object data are updated as required to reflect the current nodes in the cluster.

A Managed Object is partitioned by associating the object type with a Partition Mapper. The Partition Mapper dynamically assigns Managed Objects to a Partition at runtime. The Managed Object to Partition mapping can be dynamically changed to re-distribute application load across different nodes without any service interruption.
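The idea of a mapper that assigns objects to Partitions at runtime, and that can be swapped later, might look roughly like the following plain-Java sketch. The interface `SketchPartitionMapper`, the classes `HashingMapper` and `SketchMapperRegistry`, and the hash-based assignment are all assumptions for illustration; they are not the StreamBase® Partition Mapper API.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical mapper interface; the name and signature are illustrative only.
interface SketchPartitionMapper {
    String partitionFor(String key);
}

// Assigns a key to one of the named partitions by hashing the key.
class HashingMapper implements SketchPartitionMapper {
    private final List<String> partitions;

    HashingMapper(List<String> partitions) {
        this.partitions = partitions;
    }

    @Override
    public String partitionFor(String key) {
        // floorMod keeps the index non-negative for negative hash codes.
        return partitions.get(Math.floorMod(key.hashCode(), partitions.size()));
    }
}

// Holds the current mapper for each object type; a mapper can be replaced at runtime.
class SketchMapperRegistry {
    private final Map<String, SketchPartitionMapper> mapperByType = new ConcurrentHashMap<>();

    void setMapper(String typeName, SketchPartitionMapper mapper) {
        mapperByType.put(typeName, mapper);
    }

    String partitionFor(String typeName, String key) {
        SketchPartitionMapper mapper = mapperByType.get(typeName);
        if (mapper == null) {
            throw new IllegalStateException("no mapper registered for " + typeName);
        }
        return mapper.partitionFor(key);
    }
}
```

Calling `setMapper` again for the same type changes where subsequent lookups land. The sketch does not model moving existing objects to their new Partition.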

Nodes associated with a Partition can span geographies, providing support for transactionally consistent geographic redundancy across data centers. Transactional integrity is maintained across the geographies and failover and restore can occur across data centers.

Configurable multi-master (also known as split-brain) detection is supported. It allows a cluster either to be taken offline when a required node quorum is not available, or to continue processing in a non-quorum condition. Operator control is provided to merge object data on nodes that were running in a multi-master condition. Conflicts detected during the merge are reported to the application for conflict resolution.
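The two configurable outcomes of a lost quorum can be sketched as a small decision function. This is plain Java with hypothetical names (`SketchQuorumPolicy`, `QuorumAction`), and it assumes the quorum is expressed as a minimum number of reachable nodes; it is not the StreamBase® configuration or API.

```java
import java.util.Set;

// Possible outcomes of a quorum check in this sketch.
enum QuorumAction { CONTINUE, GO_OFFLINE }

// Hypothetical policy object; not a StreamBase class.
class SketchQuorumPolicy {
    private final int minimumNodes;              // assumed: quorum as a node count
    private final boolean continueWithoutQuorum; // assumed: configured behavior on quorum loss

    SketchQuorumPolicy(int minimumNodes, boolean continueWithoutQuorum) {
        this.minimumNodes = minimumNodes;
        this.continueWithoutQuorum = continueWithoutQuorum;
    }

    // Decide what to do given the nodes that can currently be reached.
    QuorumAction evaluate(Set<String> reachableNodes) {
        boolean hasQuorum = reachableNodes.size() >= minimumNodes;
        if (hasQuorum || continueWithoutQuorum) {
            return QuorumAction.CONTINUE;
        }
        return QuorumAction.GO_OFFLINE;
    }
}
```

Continuing without a quorum is the case in which nodes may run in a multi-master condition, so it is the case that later requires the merge and conflict resolution described above.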

A highly available timer service is provided to support transparent application timer notifications across failover and restore.

All high availability services are available without any external software or hardware.

A Managed Object can be distributed. A distributed Managed Object supports transparent remote method invocation and field access. A distributed Managed Object has a single master node on which all behavior is executed at any given time. A highly available Managed Object's master node is the current active node for the partition in which it is contained. Distribution is transparent to applications.

Class definitions can be changed on individual nodes without requiring a cluster service outage. These class changes can include both behavior changes and object shape changes (adding, removing, or changing fields). Existing objects are dynamically upgraded as nodes communicate with other nodes in the cluster. There is no impact on nodes that are running the previous version of the classes. Class changes can also be backed out without requiring a cluster service outage.

Product versions can also be updated on individual nodes in a cluster without impacting other nodes in the cluster. This allows different product versions to be running in the cluster at the same time to support rolling upgrades across a cluster without requiring a service outage.

An application consists of one or more application fragments (or simply fragments), configuration files, and dependencies, packaged into an application archive. An application archive is deployed on a node, along with an optional node deploy configuration that can override configuration in the application archive and also provide deployment-time configuration. Different application fragment types are supported, so an application can consist of homogeneous or heterogeneous fragments.